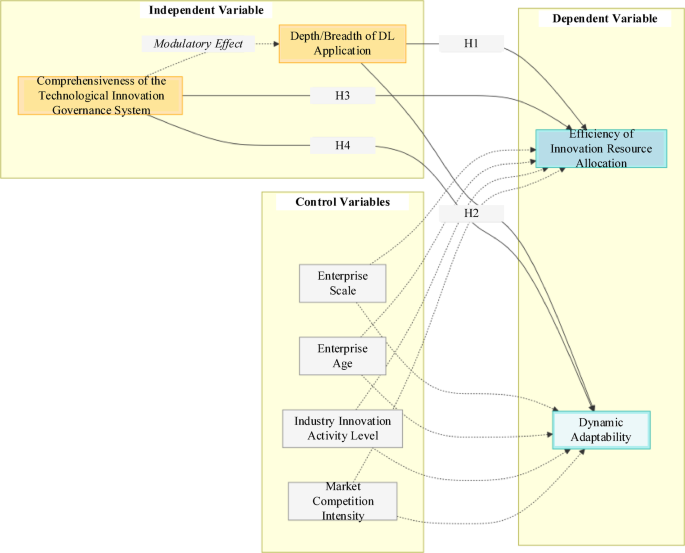

Analysis of the impact of deep learning-based technological innovation governance on the intelligent allocation of innovation resources in the high-technology industry

Data collection and parameter setting

This study constructs a comprehensive dataset for the experimental analyses. To ensure diversity and representativeness, the data are drawn primarily from multiple public databases and supplemented by internal enterprise data.

Standard & Poor’s (S&P) Global Market Intelligence Database: This database provides detailed financial information on publicly listed companies. Key financial indicators of high-tech enterprises, such as R&D expenditure, revenue, and assets, are extracted. These indicators capture the scale of enterprises’ financial inputs to innovation activities and thus their investment in innovation resources. For example, R&D expenditure directly measures the financial resources an enterprise allocates to innovation projects and reflects its commitment to technological advancement.

United States Patent and Trademark Office (USPTO) Database: This database offers extensive patent information. Patent application and authorization data from high-tech enterprises in relevant fields are collected, encompassing patent numbers, application dates, technology classification codes, and citation relationships. Patent data effectively reflects an enterprise’s innovation output and technological innovation capabilities. For instance, the number of patents in specific technological fields indicates an enterprise’s R&D achievements and competitive positioning within those areas.

Crunchbase Dataset: This dataset focuses on start-ups and emerging high-tech enterprises, providing information such as founding dates, industry classifications, funding rounds, and investor details. These data support analysis of the innovation environments and growth trajectories of newly established high-tech enterprises. In particular, funding-round data indirectly reflect the external resource support and market expectations for their innovation activities.

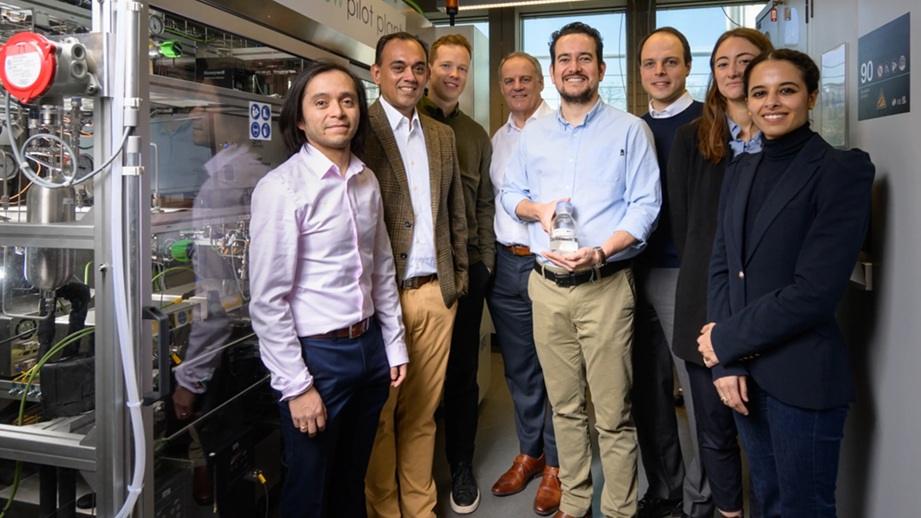

In addition, to improve the accuracy of the results, this study establishes a data cooperation mechanism with seven sample enterprises to obtain the complete operation logs of their innovation resource management systems from 2019 to 2023. These logs include non-public fields such as resource allocation decision flows, project progress tracking, and records of abnormal interventions. After desensitization, these data are integrated with the public data for modeling, which increases the granularity and credibility of the enterprise-level analysis.
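
The text does not specify how the enterprise logs are desensitized before being joined to the public data. One common approach, sketched below as an assumption rather than as the study's procedure, is keyed pseudonymization: each raw enterprise ID is replaced by an HMAC-SHA256 token, so records from different sources can still be matched while the raw ID cannot be recovered without the key. The key, the field names (`operator`, `contract_value`, `decision`, `rd_expenditure`) and the helper functions are all hypothetical.

```python
import hashlib
import hmac

# Hypothetical key: in practice it would be held by the data owner, never shipped with the data.
SECRET_KEY = b"replace-with-a-managed-secret"
# Hypothetical fields that must not leave the enterprise.
SENSITIVE_FIELDS = ("operator", "contract_value")


def pseudonymize(enterprise_id: str, key: bytes = SECRET_KEY) -> str:
    """Map a raw enterprise ID to a keyed SHA-256 token that cannot be reversed without the key."""
    return hmac.new(key, enterprise_id.encode("utf-8"), hashlib.sha256).hexdigest()


def desensitize(record: dict) -> dict:
    """Drop sensitive fields and replace the enterprise ID with its pseudonym."""
    cleaned = {k: v for k, v in record.items() if k not in SENSITIVE_FIELDS}
    cleaned["enterprise_id"] = pseudonymize(record["enterprise_id"])
    return cleaned


def integrate(private_logs: list, public_rows: list) -> list:
    """Join desensitized logs to public rows through the shared pseudonymized ID."""
    public_by_token = {pseudonymize(r["enterprise_id"]): r for r in public_rows}
    merged = []
    for log in private_logs:
        clean = desensitize(log)
        public = public_by_token.get(clean["enterprise_id"])
        if public is None:
            continue  # no matching public record for this enterprise
        row = {k: v for k, v in public.items() if k != "enterprise_id"}
        row.update(clean)  # the pseudonymized ID replaces the raw one
        merged.append(row)
    return merged


# Illustrative records only (not data from the study).
logs = [{"enterprise_id": "E03", "operator": "analyst_1", "decision": "reallocate", "year": 2021}]
public = [{"enterprise_id": "E03", "rd_expenditure": 1.2}]
rows = integrate(logs, public)
```

A keyed hash is used instead of a plain hash because short, structured IDs hashed without a key can be recovered by brute force.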

Data cleaning and preprocessing are performed to ensure data quality and completeness. Duplicate records are removed, and missing values are filled or corrected using appropriate interpolation methods. The data are then standardized and normalized to improve consistency and compatibility for the subsequent experimental analyses and model training. Together, these steps turn the systematically collected multi-source data into a robust analytical foundation for assessing the impact of DL-based technological innovation governance on intelligent innovation resource allocation in high-tech industries.
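
The cleaning steps can be sketched as follows. The text only says "appropriate interpolation methods", so linear interpolation along time is an assumption here, as is the deduplication key (one row per enterprise-year). Both z-score standardization and min-max normalization are shown because the text mentions both; typically one of them is chosen per feature.

```python
from statistics import mean, pstdev


def drop_duplicates(records, key=("enterprise_id", "year")):
    """Keep the first record seen for each key (hypothetical key: one row per enterprise-year)."""
    seen, unique = set(), []
    for record in records:
        k = tuple(record[field] for field in key)
        if k not in seen:
            seen.add(k)
            unique.append(record)
    return unique


def interpolate_linear(values):
    """Fill interior None gaps linearly; leading/trailing gaps copy the nearest observed value."""
    known = [i for i, v in enumerate(values) if v is not None]
    if not known:
        raise ValueError("series has no observed values")
    filled = list(values)
    for i, v in enumerate(values):
        if v is not None:
            continue
        left = max((k for k in known if k < i), default=None)
        right = min((k for k in known if k > i), default=None)
        if left is None:
            filled[i] = values[right]
        elif right is None:
            filled[i] = values[left]
        else:
            weight = (i - left) / (right - left)
            filled[i] = values[left] + weight * (values[right] - values[left])
    return filled


def z_score(values):
    """Standardize to mean 0 and (population) standard deviation 1."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (v - mu) / sigma for v in values]


def min_max(values):
    """Rescale to the [0, 1] range."""
    lo, hi = min(values), max(values)
    return [0.0 if hi == lo else (v - lo) / (hi - lo) for v in values]


# Illustrative R&D series for one enterprise with two missing years (not study data).
rd = interpolate_linear([10.0, None, 14.0, None])
```

Here `rd` becomes `[10.0, 12.0, 14.0, 14.0]`. In a cross-validated pipeline, the mean, standard deviation, minimum and maximum would normally be computed on the training folds only and then applied to the validation fold, to avoid leaking information.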

The experiments are conducted on a high-performance computing cluster comprising multiple compute nodes, each equipped with high-performance central processing units (CPUs) and graphics processing units (GPUs). The CPUs are Intel Xeon Platinum processors, whose high clock speeds and multi-core design suit complex data preprocessing and general computational tasks. The GPUs (NVIDIA Tesla V100 or higher-end models, including the NVIDIA A100 GPUs used for the hyperparameter search and model training) accelerate the training and inference of DL models, and each provides ample memory for model parameters and intermediate results during training. The compute nodes are interconnected via high-speed InfiniBand networking, ensuring fast data transfer and communication between nodes. This configuration can efficiently handle large-scale datasets and the training of complex DL architectures.

Model validation and result analysis

Cross-validation

To test the robustness of the SEM, this study uses k-fold cross-validation (k = 5) to evaluate goodness of fit and to ensure that the assessment results are reliable and generalizable. The dataset is divided into five folds, and the model is trained five times: each fold serves once as the validation set while the remaining four folds form the training set. Performance indicators are calculated for each fold, and the model’s final performance is the average across folds. This procedure reduces the risk that the assessment reflects overfitting to one particular split and gives a more accurate estimate of performance on unseen data.

For hyperparameter tuning, the study uses a Bayesian optimization framework instead of traditional grid search. The search space covers a learning rate in [1e−5, 1e−2], 2 to 6 hidden layers, and a Dropout rate in [0.1, 0.5]. The search is considered converged when the validation loss decreases by less than 0.1% over consecutive iterations. In total, 120 parameter combinations are evaluated in parallel on the NVIDIA A100 cluster.
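
The fold splitting, the averaging of per-fold scores, the stated search space, and the 0.1% convergence rule can be sketched as below. This is a minimal illustration, not the study's code. `fit_and_score` is a placeholder for fitting and scoring the model. The Bayesian optimizer itself (its surrogate model and acquisition function) is not sketched; in practice it would come from a library such as Optuna or scikit-optimize. `sample_config` only encodes the search space, for example for initial random trials, and the `patience` of 2 is a hypothetical reading of "consecutive iterations".

```python
import random


def kfold_indices(n, k=5, seed=0):
    """Yield (train_idx, val_idx) pairs; every sample is used for validation exactly once."""
    if not 2 <= k <= n:
        raise ValueError("k must be between 2 and n")
    idx = list(range(n))
    random.Random(seed).shuffle(idx)
    # The first n % k folds get one extra sample, so fold sizes differ by at most 1.
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in sizes:
        val = idx[start:start + size]
        train = idx[:start] + idx[start + size:]
        yield train, val
        start += size


def cross_validate(n, fit_and_score, k=5, seed=0):
    """Average a per-fold score; fit_and_score(train_idx, val_idx) is a placeholder."""
    scores = [fit_and_score(tr, va) for tr, va in kfold_indices(n, k, seed)]
    return sum(scores) / len(scores)


def sample_config(rng):
    """Draw one candidate from the search space in the text (learning rate on a log scale)."""
    return {
        "learning_rate": 10 ** rng.uniform(-5, -2),
        "hidden_layers": rng.randint(2, 6),
        "dropout": rng.uniform(0.1, 0.5),
    }


def converged(losses, rel_tol=1e-3, patience=2):
    """True if each of the last `patience` steps cut the validation loss by < rel_tol (0.1%).

    Assumes positive losses; `patience` is a hypothetical reading of "consecutive iterations".
    """
    if len(losses) < patience + 1:
        return False
    recent = losses[-(patience + 1):]
    return all((prev - cur) / prev < rel_tol for prev, cur in zip(recent, recent[1:]))
```

Sampling the learning rate as a power of ten gives each order of magnitude in [1e−5, 1e−2] equal weight, which is the usual choice for this parameter.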

The results of the cross-validation are presented in Fig. 2.

Fig. 2. Results of the cross-validation.

As Fig. 2 shows, the F1 score, precision, recall, and accuracy remain stable across all folds. The average accuracy is 0.83, and precision, recall, and F1 score also fall within an acceptable range. This suggests that the model identifies positive instances effectively while keeping its predictive performance balanced. These results support the model’s robustness and reliability and indicate that it generalizes well to held-out data.
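
The per-fold indicators and their averages can be computed as in the sketch below. It assumes a binary target with positive label 1, since the text refers to "positive instances". The outcome variable is not defined in this section, so the labels used here are hypothetical.

```python
def binary_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall and F1 for one validation fold."""
    pairs = list(zip(y_true, y_pred))
    if not pairs or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and equally long")
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    correct = sum(t == p for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": correct / len(pairs), "precision": precision, "recall": recall, "f1": f1}


def average_over_folds(fold_metrics):
    """Unweighted mean of each metric across folds (the 'final performance' in the text)."""
    return {name: sum(m[name] for m in fold_metrics) / len(fold_metrics) for name in fold_metrics[0]}
```

Averaging per-fold F1 scores, as done here, can differ slightly from computing F1 once on the pooled out-of-fold predictions, especially when fold sizes or class balance vary.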

Comparison with baseline models

To evaluate whether the DL model outperforms simpler alternatives, it is compared with several baseline models: a traditional statistical model (linear regression) and classical machine learning models (decision trees and support vector machines). All models are evaluated on the same dataset using the same evaluation indicators. The DL model has 3 hidden layers with 256, 128, and 64 neurons, respectively. It uses the ReLU activation function and a Dropout rate of 0.1 to reduce overfitting. Training uses the Adam optimizer (learning rate 0.001) and runs for up to 200 epochs with a batch size of 64 on the NVIDIA A100 cluster. Early stopping terminates training when the validation loss fails to decrease for 5 consecutive epochs. All hyperparameters are selected through the Bayesian optimization framework.
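
The reported architecture and training settings can be captured in a framework-agnostic sketch, shown below. In PyTorch, for example, they would map onto `nn.Linear`, `nn.ReLU`, `nn.Dropout` and `torch.optim.Adam`. The input and output sizes are not given in the text, so they remain parameters. `run_epoch` is a hypothetical placeholder that trains one epoch and returns the validation loss. "5 consecutive times" is read as 5 consecutive epochs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrainingConfig:
    """Settings reported in the text; input/output sizes are not given and stay parameters."""
    hidden_units: tuple = (256, 128, 64)
    activation: str = "relu"
    dropout: float = 0.1
    optimizer: str = "adam"
    learning_rate: float = 1e-3
    max_epochs: int = 200
    batch_size: int = 64
    patience: int = 5  # epochs without improvement before stopping


def count_parameters(input_dim, output_dim, hidden_units=(256, 128, 64)):
    """Weights plus biases of a fully connected network with the given layer widths."""
    dims = [input_dim, *hidden_units, output_dim]
    return sum(d_in * d_out + d_out for d_in, d_out in zip(dims, dims[1:]))


class EarlyStopping:
    """Signal a stop once the validation loss has not improved for `patience` epochs in a row."""

    def __init__(self, patience):
        self.patience = patience
        self.best = float("inf")
        self.bad_epochs = 0

    def step(self, val_loss):
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def train(config, run_epoch):
    """Run up to max_epochs; run_epoch(epoch) is a placeholder returning the validation loss."""
    stopper = EarlyStopping(config.patience)
    for epoch in range(config.max_epochs):
        if stopper.step(run_epoch(epoch)):
            return epoch + 1  # epochs actually run
    return config.max_epochs
```

For a hypothetical input of 20 features and a single output, `count_parameters(20, 1)` gives 46,593 trainable parameters. This is small relative to A100 memory, so the cluster mainly speeds up the repeated training runs of the hyperparameter search.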

Figure 3 displays the results of the comparison with the baseline models.

Fig. 3. Results of the comparison with the baseline models.

Figure 3 shows that the DL model outperforms the baselines on every evaluation indicator. Its accuracy, precision, recall, and F1 score are all higher than those of the baseline models, and its mean squared error (MSE) is lower. These results indicate that the DL model predicts innovation resource allocation outcomes more precisely, consistent with a greater capacity to capture complex patterns in the data.
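
A fair comparison requires that every model be scored on the same held-out data with the same metric. The sketch below shows that harness with MSE. The predictors and data are toy stand-ins, not the study's models or results, and `compare_models` is a hypothetical helper.

```python
def mse(y_true, y_pred):
    """Mean squared error between observed and predicted values."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and equally long")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def compare_models(models, x_val, y_val, metric=mse, higher_is_better=False):
    """Score every model on the same validation data and rank them, best first."""
    scores = {name: metric(y_val, [predict(x) for x in x_val]) for name, predict in models.items()}
    return dict(sorted(scores.items(), key=lambda kv: kv[1], reverse=higher_is_better))


# Toy validation data and stand-in predictors (not the study's models or results).
x_val, y_val = [1.0, 2.0, 3.0], [1.0, 2.0, 3.0]
ranking = compare_models({"mean_baseline": lambda x: 2.0, "identity": lambda x: x}, x_val, y_val)
```

For error metrics such as MSE, lower is better. For accuracy, precision, recall or F1, pass `higher_is_better=True` so the ranking is reversed.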

Sensitivity analysis

A sensitivity analysis is conducted to examine the model’s robustness and sensitivity, that is, how variations in input variables or model parameters influence performance. Model inputs, such as different types of innovation resources or external factors, are added or removed, and hyperparameters are adjusted, while the resulting changes in the performance indicators are recorded. The results of the sensitivity analysis are presented in Table 2.

As Table 2 shows, incorporating external market demand factors markedly improves the performance indicators, highlighting this variable’s positive contribution to prediction accuracy. In contrast, relying solely on human innovation resources or excluding financial innovation resources degrades performance. On the hyperparameter side, both the learning rate and the number of hidden layers affect model performance: learning rates that are too high or too low, and an inappropriate number of hidden layers, cause fluctuations in the key indicators. Careful tuning of these parameters therefore optimizes model performance, underscoring the importance of precise hyperparameter tuning for predictive accuracy.
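
Both parts of this analysis follow a one-at-a-time pattern. The first drops one group of input features while keeping the rest. The second varies one hyperparameter while holding the others at their base values. The sketch below illustrates that pattern under stated assumptions: `evaluate` and `evaluate_config` stand in for "train and score the model". The feature names and the additive toy scorer are hypothetical and carry no results from Table 2.

```python
def ablation_sensitivity(feature_groups, evaluate):
    """Drop one feature group at a time; report each group's score change vs. the full model."""
    all_features = [f for group in feature_groups.values() for f in group]
    baseline = evaluate(all_features)
    deltas = {}
    for name, group in feature_groups.items():
        kept = [f for f in all_features if f not in group]
        deltas[name] = evaluate(kept) - baseline
    return baseline, deltas


def one_at_a_time(base_config, grid, evaluate_config):
    """Vary one hyperparameter at a time while holding the others at their base values."""
    results = {}
    for param, values in grid.items():
        for value in values:
            config = dict(base_config, **{param: value})
            results[(param, value)] = evaluate_config(config)
    return results


# Toy additive scorer standing in for "train and evaluate" (hypothetical, not the study's model).
WEIGHTS = {"rd_expenditure": 0.2, "rd_staff": 0.05, "market_demand": 0.1}


def toy_evaluate(features):
    return 0.5 + sum(WEIGHTS[f] for f in features)


groups = {"financial": ["rd_expenditure"], "human": ["rd_staff"], "external_market": ["market_demand"]}
baseline, deltas = ablation_sensitivity(groups, toy_evaluate)
```

A one-at-a-time design is easy to read but does not reveal interactions, for example between learning rate and depth. Capturing those would require varying parameters jointly.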

Case study

To gain deeper insight into how DL-driven technological innovation governance is applied to intelligent innovation resource allocation in high-tech industries, 15 representative high-tech enterprises are selected for case studies. These case studies help corroborate the reliability and generalizability of the preceding quantitative results and also reveal distinctive mechanisms and practical experience. The results of the case analysis are detailed in Table 3.

Table 3 suggests that enterprises with higher composite scores for DL application depth/breadth and for the completeness of their technological innovation governance systems tend to perform better. For example, Enterprises 3 (new energy) and 11 (information technology) stand out in DL application depth/breadth (score: 7) and governance-system completeness (scores: 9.0 and 9.2, respectively). Their innovation resource allocation efficiency (0.78 and 0.80) and dynamic adaptability (0.85 and 0.88) are well above the sample averages (efficiency: 0.69; adaptability: 0.77). In-depth interviews with these two enterprises reveal that Enterprise 3 has shortened its new-product R&D cycle by 30% using DL models, while Enterprise 11 has cut its market response time to less than one-third of the industry average with real-time resource scheduling algorithms. These cases illustrate the key role of DL methods in improving the effectiveness of resource allocation. By contrast, enterprises with shallower DL applications or less developed technological innovation governance systems perform somewhat worse in innovation resource allocation.

These findings partially confirm the hypothesized relationships among the variables in the previously constructed SEM. DL application depth/breadth positively affects both the efficiency and the dynamic adaptability of intelligent innovation resource allocation. The completeness of the technological innovation governance system plays a moderating role that strengthens these positive effects. In addition, the level of technological innovation activity and the intensity of market competition differ across industries and influence enterprise innovation resource allocation to varying degrees. This offers a reference for enterprises formulating innovation resource allocation strategies suited to their own industry environments.

Analysis of these case enterprises also distills common characteristics and best practices from the successful cases. These include applying DL technology in depth across multiple stages and establishing a comprehensive technological innovation governance system to cope with internal and external environmental changes. These insights offer practical, actionable guidance for high-tech enterprises and can support the broader adoption and deeper integration of DL-driven technological innovation governance within the high-tech industry.
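
The size of the gap between the two leading enterprises and the sample averages can be made concrete from the figures quoted for Table 3. The short calculation below is descriptive arithmetic on those reported values; it is not a significance test, and the variable names are illustrative.

```python
# Figures as quoted from Table 3 in the text.
SAMPLE_AVERAGE = {"efficiency": 0.69, "adaptability": 0.77}
REPORTED = {
    "Enterprise 3": {"efficiency": 0.78, "adaptability": 0.85},
    "Enterprise 11": {"efficiency": 0.80, "adaptability": 0.88},
}


def relative_gap(value, average):
    """Relative difference from the sample average, e.g. 0.13 means 13% above it."""
    return (value - average) / average


gaps = {
    (name, metric): relative_gap(value, SAMPLE_AVERAGE[metric])
    for name, scores in REPORTED.items()
    for metric, value in scores.items()
}
for (name, metric), gap in gaps.items():
    print(f"{name}, {metric}: {gap:+.1%} vs. sample average")
```

This prints gaps of about +13.0% (efficiency) and +10.4% (adaptability) for Enterprise 3, and +15.9% and +14.3% for Enterprise 11.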

Robustness test

To strengthen causal inference, this study adopts the difference-in-differences (DID) method within a quasi-natural experiment design. The “AI Demonstration Enterprise” policy (implemented by a provincial science department in 2024) serves as an exogenous shock for causal identification. The treatment group comprises 10 enterprises required to adopt DL technology under this policy. The control group comprises 10 matched enterprises (same industry and scale) that are not subject to the DL mandate. The estimation results are reported in Table 4.
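
A specification of the kind this design implies can be written as follows. It is a sketch consistent with the terms Table 4 is described as containing: the Treat × Post interaction, enterprise size as a control, and industry and quarter fixed effects. The exact control set and the functional form of the outcome are assumptions, not the study's reported model.

$$
\mathit{Eff}_{it} = \alpha + \beta\,(\mathit{Treat}_i \times \mathit{Post}_t) + \delta\,\mathit{Treat}_i + \gamma\,\mathit{Size}_{it} + \mu_{j(i)} + \lambda_t + \varepsilon_{it}
$$

Here $\mathit{Eff}_{it}$ is the innovation efficiency of enterprise $i$ in quarter $t$. $\mathit{Treat}_i = 1$ for the 10 mandated enterprises, and $\mathit{Post}_t = 1$ for quarters after the policy takes effect. $\mu_{j(i)}$ is a fixed effect for the industry $j$ of enterprise $i$, and $\lambda_t$ is a quarter fixed effect. The main effect of $\mathit{Post}_t$ is absorbed by $\lambda_t$, and $\beta$ is the DID estimate of the policy effect.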

Table 4 shows that the coefficient on the core interaction term Treat × Post is significantly positive in all three models (0.191–0.203, p < 0.01). This suggests that, after the policy took effect, the innovation efficiency of treatment-group enterprises rose by an average of 19.7–20.3% relative to the control group. The full-sample and matched-sample results are highly consistent (all R² values exceed 0.68), and the dynamic-effects test indicates that the treatment effect persists over time. Among the control variables, enterprise size is not significant, suggesting that the efficiency gain stems mainly from the application of DL technology rather than from scale. Controlling for industry and quarter fixed effects removes time-invariant industry differences and common quarterly shocks. This reduces the influence of potential confounding factors and strengthens the causal interpretation.
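
The quantity behind the Treat × Post coefficient can be illustrated in the simplest two-group, two-period case. There, the OLS coefficient on the interaction, from a regression of the outcome on Treat, Post and their interaction, equals the double difference of the four cell means computed below. The models in Table 4 add controls and fixed effects, so they do not reduce exactly to this. The panel values are toy numbers, not the study's data, and `did_2x2` is a hypothetical helper.

```python
from statistics import mean


def did_2x2(panel):
    """Double difference of cell means.

    panel: iterable of (treated: bool, post: bool, outcome: float) observations.
    """
    rows = list(panel)

    def cell_mean(treated, post):
        values = [y for t, p, y in rows if t == treated and p == post]
        if not values:
            raise ValueError(f"no observations for treated={treated}, post={post}")
        return mean(values)

    treated_change = cell_mean(True, True) - cell_mean(True, False)
    control_change = cell_mean(False, True) - cell_mean(False, False)
    return treated_change - control_change


# Toy efficiency scores (not the study's data): treated rise by 0.5, controls by 0.1.
toy_panel = [
    (True, False, 1.0), (True, False, 1.2), (True, True, 1.5), (True, True, 1.7),
    (False, False, 1.0), (False, False, 1.0), (False, True, 1.1), (False, True, 1.1),
]
estimate = did_2x2(toy_panel)
```

Here `estimate` is approximately 0.4. Subtracting the control group's change removes shocks common to both groups. The result is causal only under the parallel-trends assumption, which the dynamic-effects test is meant to probe.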
